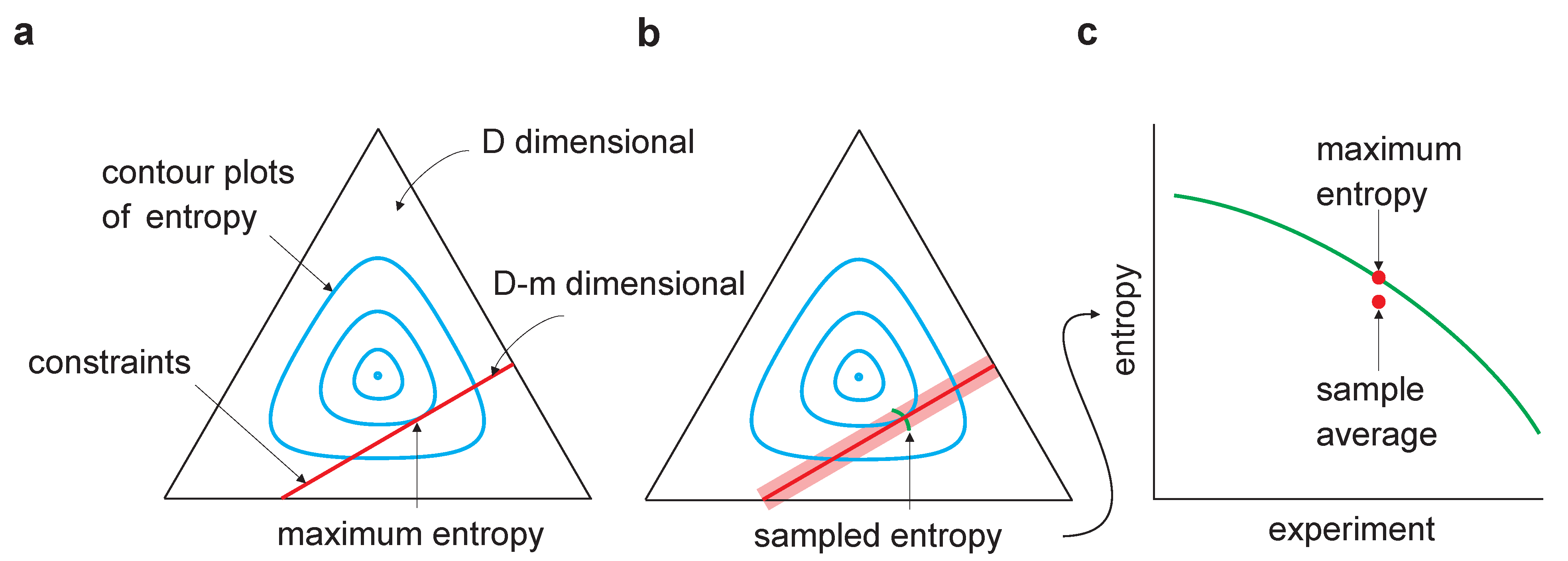

Many different axioms have been employed in defining mathematical measures of probabilistic entropy (Csiszár, 2008). Examples of key ideas include that only the set of probabilities, and not the labeling of possible results, affects entropy (these are sometimes called symmetry or permutability axioms); that entropy is zero if a possible result has probability one; and that addition or removal of a zero-probability result does not change the entropy of a distribution. An important idea is that entropy is maximum if all possible results are equally probable. This idea traces back to Laplace's recommendation for dealing formally with uncertainty, known as the principle of indifference: if you have no information about the probabilities of the results of an experiment, assume they are evenly distributed (Laplace, 1814).
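
The properties listed above can be checked numerically. The following is a minimal sketch that assumes Shannon entropy in bits as the measure (the text does not fix one particular formulation); the function name `shannon_entropy` and the example distributions are illustrative choices, not taken from the cited work.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy in bits: the expected value of -log2(p)."""
    if not math.isclose(sum(probs), 1.0, abs_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Zero-probability results are skipped, since p * log2(p) -> 0 as p -> 0.
    return sum(-p * math.log2(p) for p in probs if p > 0)

# Illustrative distributions over four possible results (assumed values).
uniform = [0.25, 0.25, 0.25, 0.25]
skewed = [0.7, 0.1, 0.1, 0.1]

# Maximum entropy when all results are equally probable.
assert shannon_entropy(uniform) > shannon_entropy(skewed)

# Symmetry: relabeling (permuting) the results leaves entropy unchanged.
assert math.isclose(shannon_entropy(skewed),
                    shannon_entropy([0.1, 0.7, 0.1, 0.1]))

# Entropy is zero if one result has probability one.
assert shannon_entropy([1.0, 0.0]) == 0

# Adding a zero-probability result does not change entropy.
assert math.isclose(shannon_entropy(skewed),
                    shannon_entropy(skewed + [0.0]))
```

Other entropy measures satisfy some of these properties and not others, so the checks illustrate the axioms for one measure rather than for entropy in general.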

Probabilistic entropy is often defined as expected surprise (or expected information content); the particular way in which surprise and expectation are formulated determines how entropy is calculated. Many different formulations of entropy, including and beyond Shannon, have been used in mathematics, physics, neuroscience, ecology, and other disciplines (Crupi et al., 2018).

Information is a concept employed by everyone. Intuitively, the lack of information is uncertainty, which can be reduced by the acquisition of information. Formalizing these intuitive notions requires concepts from stochastics (Information and Entropy). Beyond the concept of information content itself, the concept of average information content, or probabilistic entropy, translates into measures of the amount of uncertainty in a situation. Finding sound methodologies for assessing and taming uncertainty (Hertwig et al., 2019) is an ongoing scientific process, which began formally in the seventeenth and eighteenth centuries when Pascal, Laplace, Fermat, de Moivre and Bayes began writing down the axioms of probability. This early work set the foundation for work in philosophy of science and statistics toward modern Bayesian Optimal Experimental Design theories (Chamberlin, 1897; Good, 1950; Lindley, 1956; Platt, 1964; Nelson, 2005).

We introduce a set of playful activities aimed at fostering intuitions about entropy and describe a primary school intervention that was conducted according to this plan. Fourth grade schoolchildren (8–10 years) played a version of Entropy Mastermind with jars and colored marbles, in which a hidden code to be deciphered was generated at random from an urn with a known, visually presented probability distribution of marble colors. Children prepared urns according to specified recipes, drew marbles from the urns, generated codes and guessed codes. Despite not being formally instructed in probability or entropy, children were able to estimate and compare the difficulty of different probability distributions used for generating possible codes.
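
To make the comparison of urn difficulty concrete, the sketch below computes the entropy of two marble urns and draws a hidden code from each. It rests on several assumptions that are not in the text: Shannon entropy in bits as the measure, drawing with replacement, a code length of three, and the marble counts themselves. The names `urn_entropy` and `draw_code` are hypothetical.

```python
import math
import random

def urn_entropy(urn):
    """Shannon entropy (bits) of an urn's color distribution.

    `urn` maps each color to its number of marbles.
    """
    total = sum(urn.values())
    return sum(-(n / total) * math.log2(n / total)
               for n in urn.values() if n > 0)

def draw_code(urn, length, rng):
    """Draw a hidden code by sampling colors with replacement (an assumption)."""
    colors = list(urn)
    weights = [urn[color] for color in colors]
    return rng.choices(colors, weights=weights, k=length)

# Illustrative urns, not the recipes used in the study.
even_urn = {"red": 5, "blue": 5, "green": 5, "yellow": 5}
skewed_urn = {"red": 17, "blue": 1, "green": 1, "yellow": 1}

rng = random.Random(0)  # fixed seed so repeated runs draw the same codes
for name, urn in [("even", even_urn), ("skewed", skewed_urn)]:
    code = draw_code(urn, length=3, rng=rng)
    print(f"{name}: entropy = {urn_entropy(urn):.2f} bits, example code = {code}")
```

Under these assumptions the evenly filled urn has the higher entropy, which matches the intuition that its codes are harder to guess than codes drawn from an urn dominated by one color.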

Today these concepts have come to pervade modern science and society, and are increasingly being recommended as topics for science and mathematics education.
